For a while, I’ve been saying that developers should build great software, and pick their host at the last responsible moment. Apps first, not infrastructure. But now, I don’t think that’s exactly right. It’s naive. As you’re writing code, there are at least three reasons you’ll want to know where your app will eventually run:

- It impacts your architecture. You likely need to know if you’re dealing with a function-as-a-service environment, Kubernetes, virtual machines, Cloud Foundry, or whatever. This changes how you lay out components, store state, etc.

- There are features your app may use. For each host, there are likely capabilities you want to tap into. Whether it’s input/output bindings in Azure Functions, ConfigMaps in Kubernetes, or something else, you probably can take advantage of what’s there.

- It changes your local testing setup. It makes sense that you want to test your code in a production-like environment before you get to production. That means you’ll invest in a local setup that mimics the eventual destination.

If you’re using Kubernetes, you’ve got lots of options to address that third point. I took four popular Kubernetes development options for a spin, and thought I’d share my findings. There are more than four options (e.g. k3d, MicroK8s, Micronetes), but I had to draw the line somewhere.

For this post, I’m considering solutions that run on my local machine. Developers using Kubernetes may also spin up cloud clusters (and use features like Azure Dev Spaces for AKS), or sandboxes in something like Katacoda. But I suspect that most will be like me, and enjoy doing things locally. Let’s dig in.

Option 1: Docker Desktop

For many, this is the “easy” choice. You’re probably already running Docker Desktop on your PC or Mac.

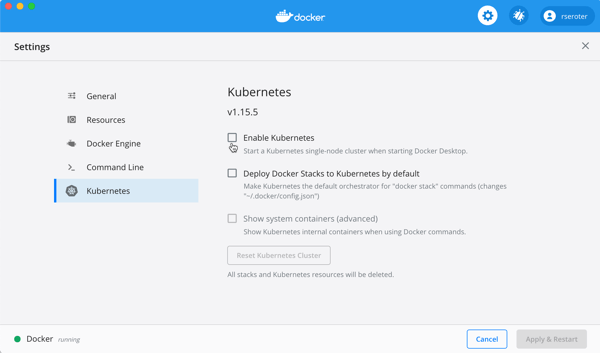

Kubernetes support is off by default, so you have to explicitly enable it. The screen below is accessible via the “Preferences” menu.

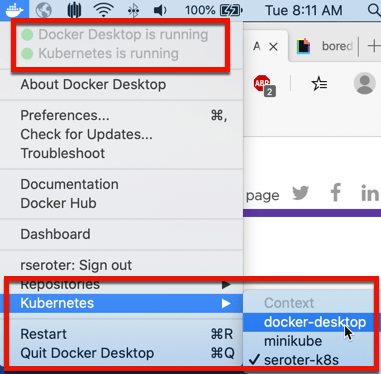

After a few minutes, my cluster was running, and I could switch my Kubernetes context to the Docker Desktop environment.

I proved this by running a couple of simple kubectl commands that showed I had a single-node, local cluster.
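Those commands probably looked something like the sketch below. The context name `docker-desktop` is an assumption (run `kubectl config get-contexts` to see what’s registered on your machine), and `check_cluster` is just a hypothetical helper name so the same steps can be pointed at other clusters.

```shell
# Hypothetical helper: point kubectl at a context, then show what's there.
# The default context name "docker-desktop" is an assumption.
check_cluster() {
  context="${1:-docker-desktop}"
  # Switch the active kubectl context.
  kubectl config use-context "$context" || return 1
  # List the nodes; a Docker Desktop cluster should report a single node.
  kubectl get nodes
  # Print the address of the control plane.
  kubectl cluster-info
}
```

Calling `check_cluster` with no arguments targets Docker Desktop; passing a different context name targets another cluster.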

This cluster doesn’t have the Kubernetes Dashboard installed by default, so you can follow a short set of steps to add it. You can also, of course, use other dashboards, like Octant.

With my cluster running, I wanted to create a pod, and expose it via a service.

Here’s the corresponding YAML file:

    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: simple-k8s-app
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: simple-k8s-app
      template:
        metadata:
          labels:
            app: simple-k8s-app
        spec:
          containers:
          - name: simple-k8s-app
            image: rseroter/simple-k8s-app-kpack:latest
            ports:
            - containerPort: 8080
            env:
            - name: FLAG_VALUE
              value: "on"
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: simple-k8s-app
    spec:
      type: LoadBalancer
      ports:
      - port: 9001
        protocol: TCP
        targetPort: 8080
      selector:
        app: simple-k8s-app

I used the “LoadBalancer” type, which I honestly didn’t expect to see work. Everything I’ve seen online says I need to explicitly set up ingress via NodePort. But, once I deployed, my container was running, and the service was available on localhost:9001.
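Deploying that file and checking the result might look like the sketch below. It assumes the manifest is saved as `simple-k8s-app.yaml` (the name used in the Tilt section later in this post), and `deploy_app` is a hypothetical helper name, not something kubectl ships with.

```shell
# Hypothetical helper: apply the manifest, then show the service it created.
deploy_app() {
  manifest="${1:-simple-k8s-app.yaml}"
  # Create or update the Deployment and Service defined in the file.
  kubectl apply -f "$manifest" || return 1
  # Show the service, including its type and the port mapping (9001 -> 8080).
  kubectl get service simple-k8s-app
}
```

After calling `deploy_app`, something like `curl http://localhost:9001` should return the app’s response on Docker Desktop.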

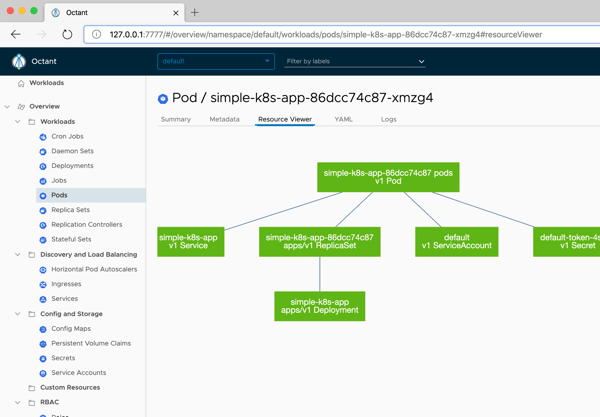

Nice. Now that there was something in my cluster, I started up Octant, and saw my pods, containers, and more.

Option 2: Minikube

This has been my go-to for years. Seemingly, for many others as well. It’s featured prominently in the Kubernetes docs and gives you a complete (single node via VM) solution. If you’re on a Mac, it’s super easy to install with a simple “brew install minikube” command.

To start up Kubernetes, I simply enter “minikube start” in my Terminal. I usually specify a Kubernetes version number, because it defaults to the latest, and some software that I install expects a specific version.
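Pinning the version is a single flag. In this sketch, `start_minikube` is a hypothetical wrapper and the default version is only a placeholder; substitute whatever your other tooling expects.

```shell
# Hypothetical wrapper: start minikube with a pinned Kubernetes version.
# The default "v1.17.0" is a placeholder, not a recommendation.
start_minikube() {
  version="${1:-v1.17.0}"
  minikube start --kubernetes-version="$version"
}
```

Without the flag, a plain `minikube start` picks the default version for your minikube release.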

After a few minutes, I’m up and running. Minikube has some of its own commands, like “minikube status”, which returns the status of the environment.

There are other useful commands for setting Docker environment variables, mounting directories into minikube, tunneling access to containers, and serving up the Kubernetes dashboard.
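As a rough reference, here’s how those commands are typically invoked. The function name `use_minikube_docker` is hypothetical; it wraps the usual pattern of evaluating the output of `minikube docker-env` so that the local docker CLI talks to minikube’s Docker daemon.

```shell
# Hypothetical helper: point the local docker CLI at minikube's Docker daemon
# by evaluating the export statements that `minikube docker-env` prints.
use_minikube_docker() {
  eval "$(minikube docker-env)"
}

# The other subcommands mentioned above are run directly in a terminal:
#   minikube status      # state of the host, kubelet, and API server
#   minikube mount <source directory>:<target directory>
#   minikube tunnel      # route to services of type LoadBalancer
#   minikube dashboard   # open the Kubernetes dashboard
```

The environment change only lasts for the current shell session, so it has to be repeated in each new terminal.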

My deployment and service YAML definitions are virtually the same as last time. The only difference? I’m using NodePort here, and it worked fine.

    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: simple-k8s-app
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: simple-k8s-app
      template:
        metadata:
          labels:
            app: simple-k8s-app
        spec:
          containers:
          - name: simple-k8s-app
            image: rseroter/simple-k8s-app-kpack:latest
            ports:
            - containerPort: 8080
            env:
            - name: FLAG_VALUE
              value: "on"
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: simple-k8s-app
    spec:
      type: NodePort
      ports:
      - port: 9001
        protocol: TCP
        targetPort: 8080
      selector:
        app: simple-k8s-app

After applying this configuration, I could reach my container using the host IP (retrieved via “minikube ip”) and the generated port number.
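Putting those two pieces together by hand gets tedious, so here’s a minimal sketch that builds the URL. The helper name `app_url` is hypothetical; it reads the node port that Kubernetes assigned to the service and combines it with the minikube IP.

```shell
# Hypothetical helper: print the URL for a NodePort service running in minikube.
app_url() {
  service="${1:-simple-k8s-app}"
  # IP address of the minikube node.
  ip="$(minikube ip)" || return 1
  # Port that Kubernetes generated for the first port of the service.
  port="$(kubectl get service "$service" -o jsonpath='{.spec.ports[0].nodePort}')" || return 1
  echo "http://${ip}:${port}"
}
```

From there, `curl "$(app_url)"` should hit the app. The built-in `minikube service simple-k8s-app --url` command prints a similar URL without any scripting.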

Option 3: kind

A handful of people have been pushing this on me, so I wanted to try it out as well. How’s it different from minikube? A few ways. First, it’s not virtual machine-based. The name stands for Kubernetes in Docker, as the cluster nodes are running in Docker containers. Since it’s all local Docker stuff, it’s easy to use your local registry without any extra hoops to jump through. What’s also nice is that you can create multiple worker nodes, so you can test more realistic scenarios. kind was primarily designed for testing Kubernetes itself, but you can use it for your own local development as well.

Installing is fairly straightforward. For those on Mac, a simple “brew install kind” gets you going. When creating clusters, you can simply do “kind create cluster”, or do that with a configuration file to customize the build. I created a simple config that defined two control plane nodes and two worker nodes.

    # a cluster with 2 control-plane nodes and 2 workers
    kind: Cluster
    apiVersion: kind.x-k8s.io/v1alpha4
    nodes:
    - role: control-plane
    - role: control-plane
    - role: worker
    - role: worker

After creating the cluster with that YAML configuration, I had a nice little cluster running inside Docker containers.
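Creating the cluster from that file might look like the sketch below. The file name `kind-config.yaml` and the helper name `create_kind_cluster` are assumptions for illustration. The `kind-kind` context assumes the default cluster name (“kind”) and a kind release that names contexts “kind-&lt;cluster name&gt;”.

```shell
# Hypothetical helper: create a kind cluster from a config file, then list its nodes.
create_kind_cluster() {
  config="${1:-kind-config.yaml}"
  # Build the cluster described in the config file.
  kind create cluster --config "$config" || return 1
  # Assumes the default cluster name, which yields a context called "kind-kind".
  kubectl get nodes --context kind-kind
}
```

With the config above, the node list should show two control-plane nodes and two workers.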

It doesn’t look like the UI Dashboard is built in, so again, you can either install it yourself, or point your favorite dashboard at the cluster. Here, Octant shows me the four nodes.

This time, I deployed my pod without a corresponding service. It’s the same YAML as above, but no service definition. Why? Two reasons: (1) I wanted to try port forwarding in this environment, and (2) ingress in kind is a little trickier than in the above platforms.

So, I got the name of my pod, and tunneled to it via this command:

kubectl port-forward pod/simple-k8s-app-6dd8b59b97-qwsjb 9001:8080

Once I did that, I pinged http://127.0.0.1:9001 and pulled up the app in the container. Nice!
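Pod names change with every rollout, so copying them by hand gets old. Here’s a minimal sketch that looks the pod up by the `app` label from the Deployment and forwards to it; `forward_app` is a hypothetical helper name.

```shell
# Hypothetical helper: find the app's pod by label and forward local port 9001 to it.
forward_app() {
  # "-o name" prints entries like "pod/<pod name>"; take the first match.
  pod="$(kubectl get pods -l app=simple-k8s-app -o name | head -n 1)"
  if [ -z "$pod" ]; then
    echo "no pod found with label app=simple-k8s-app" >&2
    return 1
  fi
  # Forward local port 9001 to port 8080 in the container.
  kubectl port-forward "$pod" 9001:8080
}
```

An alternative that skips the lookup is `kubectl port-forward deployment/simple-k8s-app 9001:8080`, which lets kubectl pick a pod from the deployment.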

Option 4: Tilt

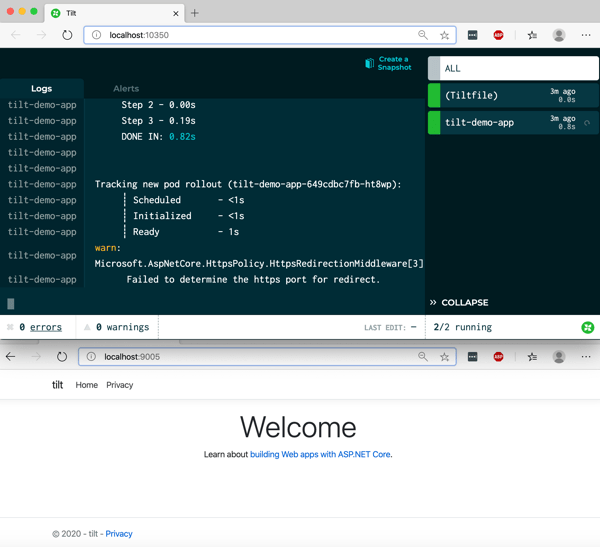

This is a fairly new option. Tilt is positioned as “local Kubernetes development with no stress.” BOLD CLAIM. Instead of just being a vanilla Kubernetes cluster to deploy to, Tilt offers dev-friendly experiences for packaging code into containers, seeing live updates, troubleshooting, and more. So, you do have to bring your Kubernetes cluster to the table before using Tilt.

So, I again started up Docker Desktop and got that Kubernetes environment ready to go. Then, I followed the Tilt installation instructions for my machine. After a bit, everything was installed, and typing “tilt” into my Terminal gave me a summary of what Tilt does, and available commands.

I started by just typing “tilt up” and got a console and web UI. The web UI told me I needed a Tiltfile, and I do what I’m told. My file just contained a reference to the YAML file I used for the above Docker Desktop demo.

k8s_yaml('simple-k8s-app.yaml')

As soon as I saved the file, things started happening. Tilt immediately applied my YAML file, and started the container up. In a separate window I checked the state of the deployment via kubectl, and sure enough everything was up and running.
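That kubectl check could be as simple as the sketch below. The helper name `check_rollout` and the 60 second timeout are assumptions; the deployment name and label come from the YAML earlier in this post.

```shell
# Hypothetical helper: wait for the deployment to finish rolling out, then list its pods.
check_rollout() {
  # Blocks until the rollout completes or the (arbitrary) timeout expires.
  kubectl rollout status deployment/simple-k8s-app --timeout=60s || return 1
  # Show the pods created from the deployment's pod template.
  kubectl get pods -l app=simple-k8s-app
}
```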

But that’s not really the power of this thing. For devs, the fun comes from having the builds automated too. Not just a finished container image. So, I built a new ASP.NET Core app using Visual Studio Code, added a Dockerfile, and put it at the same directory level as the Tiltfile. Then, I updated my Tiltfile to reference the Dockerfile.

k8s_yaml('simple-k8s-app.yaml')

docker_build("tilt-demo-app", "./webapp", dockerfile="Dockerfile")

After saving the files, Tilt got to work and built my image, added it to my local Docker registry, and deployed it to the Kubernetes cluster.

The fun part is that now I could just change the code, save it, and seconds later Tilt rebuilt the container image and deployed the changes.

If your future includes Kubernetes, and it looks like for most, it does, you’ll want a good developer workflow. That means using a decent local experience. You may also use clusters in the cloud to complement the on-premises ones. That’s cool. Also consider how you’ll manage all of them. Today, VMware shipped Tanzu Mission Control, which is a cool way to manage Kubernetes clusters created there, or attached from anywhere. For fun, I attached my existing Azure Kubernetes Service (AKS) cluster, and the kind cluster we created here. Here’s the view of the kind cluster, with all its nodes visible and monitored.

What else do you use for local Kubernetes development?
