During most workdays, I exist in a state of continuous partial attention. I bounce between (planned and unplanned) activities, and accept that I’m often interrupt-driven. While that’s not an ideal state for humans, it’s a great state for our technology systems. Event-driven applications act based on all sorts of triggers: time itself, user-driven actions, system state changes, and much more. Often, these batch-or-realtime, event-driven activities are asynchronous and coordinated in some way. What options do you have for modeling event-driven processes, and what trade-offs do you make with each option?

Option #1 – Single, Deterministic Process

In this scenario, the event handler is monolithic in nature, and any embedded components are purpose-built for the process at hand. Arguably, it’s just a visually modeled code class. While initiated via events, the transition between internal components is pre-determined. The process is typically deployed and updated as a single unit.

What would you use to build it?

You’ve seen (and built?) these before. A traditional ETL job fits the bill. Made up of components for each stage, it’s a single process executed as a linear flow. I’d also categorize some ESB workflows—like BizTalk orchestrations—in this category. Specifically, those with send/receive ports bound to the specific orchestration, embedded code, or external components built JUST to support that orchestration’s flow.

In any of these cases, it’s hard (or impossible) to change part of the event handler without re-deploying the entire process.

What are the benefits?

Like with most monolithic things, there’s value in (perceived) simplicity and clarity. A few other benefits:

- Clearer sense of what’s going on. When there’s a single artifact that explains how you handle a given event, it’s fairly simple to grok the flow. What happens when sensor data comes in? Here you go. It’s predictable.

- Easier to zero-in on production issues. Did a step in the bulk data sync job fail? Look at the flow and see what bombed out. Clean up any side effects, and re-run. This doesn’t necessarily mean things are easy to fix—frankly, it could be harder—but you do know where things went wrong.

- Changing and testing everything at once. If you’re worried about the side effects of changing a piece of a flow, that risk may lessen when you’re forced to test the whole thing when one tiny thing changes. One asset to version, one asset to track changes for.

- Accommodates companies with teams of specialists. From what I can tell, many large companies still have centers-of-excellence for integration pros. That means most ETL and ESB workflows come out of here. If you like that org structure, then you’ll prefer more integrated event handlers.

What are the risks?

These processes are complicated, not complex. Other risks:

- Cumbersome to change individual parts of the process. Nowadays, our industry prioritizes quick feedback loops and rapid adjustments to software. That’s difficult to do with monolithic event-driven processes. Is one piece broken? Better prioritize, upgrade, compile, test, and deploy the whole thing!

- Non-trivial to extend the process to include more steps. When we think of event-driven activities, we often think of fairly dynamic behavior. But when all the event responses are tightly coordinated, it’s tough to add/adjust/remove steps.

- Typically centralizes work within a single team. While your org may like siloed teams of experts, that mode of working doesn’t lend itself to agility or customer focus. If you build a monolithic event-driven process, expect delivery delays as the work queues up behind constrained developers.

- Process scales as one single unit. Each stage of an event-driven workflow will have its own resource demands. Some will be CPU intensive. Others produce heavy disk I/O. If you have a single ETL or ESB workflow to handle events, expect to scale that entire thing when any one component gets constrained. That’s pretty inefficient and often leads to over-provisioning.

Option #2 – Orchestrated Components

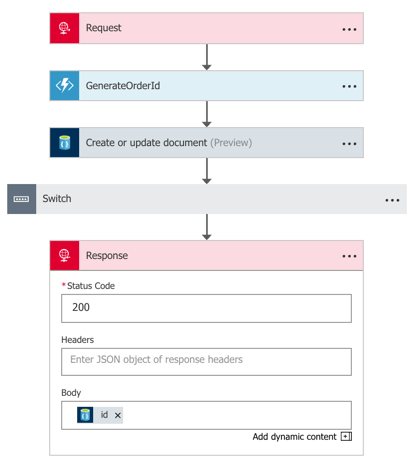

In this scenario, you’ve got a fairly loose wrapper around independent services that respond to events. These are individual components, built and delivered on their own. While still somewhat deterministic—you are still modeling a flow—the events aren’t trapped within that flow.

What would you use to build it?

Without a doubt, you can still use traditional ESB tools to construct this model. A BizTalk orchestration that listens to events and calls out to standalone services? That works. Most iPaaS products also fit the bill here. If you build something with Azure Logic Apps, you’re likely going to be orchestrating a set of services in response to an event. Those services could be REST-based APIs backed by API Management, Azure Functions, or a Service Bus queue that may trigger a whole other event-driven process!

You could also use tools like Spring Cloud Data Flow to build orchestrated, event-driven processes. Here, you chain together standalone Spring Boot apps atop a messaging backbone. The services are independent, but with a wrapper that defines a flow.

What are the benefits?

The main benefits of this model stem from the decoupling and velocity that comes with it. Others are:

- Distributed development. While you still have someone stitching the process together, develop the components independently. And hopefully, you get more people in the mix who don’t even need to know the “wrapper” technology. Or in the case of Spring Cloud Data Flow or Logic Apps, the wrapper technology is dev-oriented and easier to understand than traditional integration systems. Either way, this means more parallel development and faster turnaround of the entire workflow.

- Composable processes. Configure or reconfigure event handlers based on what’s needed. Reuse each step of the event-driven process (e.g. source channel, generic transformation component) in other processes.

- Loose grip on the event itself. There could be many parties interested in a given event. Your flow may be just one. While you could reuse the inbound channel to spawn each event-driven processes, you can also wiretap orchestrated processes.

What are the risks?

You’ve got some risks with a more complex event-driven flow. Those include:

- Complexity and complicated-ness. Depending on how you build this, you might not only be complicated but also complex! Many moving parts, many distributed components. This might result in trickier troubleshooting and less certainty about how the system behaves.

- Hidden dependencies. While the goal may be to loosely orchestrate services in an event-driven flow, it’s easy to have leaky abstractions. “Independent” services may share knowledge between each other, or depend on specific underlying infrastructure. This means that you need good documentation, and services that don’t assume that dependencies exist.

- Breaking changes and backwards compatibility. Any time you have a looser federation of coordinated pieces, you increase the likelihood of one bad actor causing cascading problems. If you have a bunch of teams that build/run services on their own, and one team combines them into an event-driven workflow, it’s possible to end up with unpredictable behavior. Mitigation options? Strong continuous integration practices to catch breaking changes, and a runtime environment that catches and isolates errors to minimize impact.

Option #3 – Choreographed Components

In this scenario, your event-driven processes are extremely fluid. Instead of anything dictating the flow, services collaborate by publishing and subscribing to messages. It’s fully decentralized. Any given services has no idea who or what is upstream or downstream of it. They do their job, and any service that wants to do subsequent work, great.

What would you use to build it?

In these cases, you’re often working with low-level code, not high level abstractions. Makes sense. But there are frameworks out there that make it easier for you if you don’t crave writing to or reading from queues. For .NET developers, you have things like MassTransit or nServiceBus. Those provide helpful abstractions. If you’re a Java developer, you’ve got something like Spring Cloud Stream. I’ve really fallen in love with it. Stream provides an elegant abstraction atop RabbitMQ or Apache Kafka where the developer doesn’t have to know much of anything about the messaging subsystem.

What are the benefits?

Some of the biggest benefits come from the velocity and creativity that stem from non-deterministic event processing.

- Encourages adaptable processes. With choreographed event processors, making changes is simple. Deploy another service, and have it listen for a particular event type.

- Makes everyone an integration developer. Done right, this model lessens the need for a siloed team of experts. Instead, everyone builds apps that care about events. There’s not much explicit “integration” work.

- Reflects changing business dynamics. Speed wins. But not speed for the sake of it. But speed of learning from customers and incorporating feedback into experiments. Scrutinize anything that adds friction to your learning process. Fixed workflows owned by a single team? Increasingly, that’s an anti-pattern for today’s software-driven, event-powered businesses. You want to be able to handle the influx of new data and events and quickly turn that into value.

What are the risks?

Clearly, there are risks to this sort of “controlled chaos” model of event processing. These include:

- Loss of cross-step coordination. There’s value in event-driven workflows that manage state between stages, compensate for failed operations, and sequence key steps. Now, there’s nothing that says you can’t have some processes that depend on orchestration, and some on choreography. Don’t adopt an either-or mentality here!

- Traceability is hairy. When an event can travel any number of possible paths, and those paths are subject to change on a regular basis, auditability can’t be taken for granted! If it takes a long time for an inbound event to reach a particular destination, you’ve got some forensics to do. What part was slow? Did something get dropped? How come this particular step didn’t get triggered? These aren’t impossible challenges, but you’ll want to invest in solid logging and correlation tools.

You’ve got lots of options for modeling event-driven processes. In reality, you’ll probably use a mix of all three options above. And that’s fine! There’s a use case for each. But increasingly, favor options #2 and #3 to give you the flexibility you need.

Did I miss any options? Are there benefits or risks I didn’t list? Tell me in the comments!

Leave a comment