In this series of blog posts, I’m looking at how well some leading cloud providers have embedded their management tools within the Microsoft Visual Studio IDE. In the first post of the series, I walked through the Windows Azure management capabilities in Visual Studio 2012. This evaluation looks at the completeness of coverage for browsing, deploying, updating, and testing cloud services. In this post, I’ll assess the features of the Amazon Web Services (AWS) cloud plugin for Visual Studio.

The table below summarizes my overall assessment; keep reading for the in-depth review.

| Category | AWS | Notes |
| --- | --- | --- |
| Browsing | | |
| Web applications and files | | You can browse a host of properties about your web applications, but cannot see the actual website files themselves. |
| Databases | | Excellent coverage of each AWS database; you can see properties and data for SimpleDB, DynamoDB, and RDS. |
| Storage | | Full view into the settings and content in S3 object storage. |
| VM instances | | Deep view into VM templates, instances, policies. |
| Messaging components | | View all the queues, subscriptions and topics, as well as the properties for each. |
| User accounts, permissions | | Look through a complete set of IAM objects and settings. |
| Deploying / Editing | | |
| Web applications and files | | Create CloudFormation stacks directly from the plugin. Elastic Beanstalk is triggered from the Solution Explorer for a given project. |
| Databases | | Easy to create databases, as well as change and delete them. |
| Storage | | Create and edit buckets, and even upload content to them. |
| VM instances | | Deploy new virtual machines, delete existing ones with ease. |
| Messaging components | | Create SQS queues as well as SNS Topics and Subscriptions. Make changes as well. |
| User accounts, permissions | | Add or remove groups and users, and define both user and group-level permission policies. |
| Testing | | |
| Databases | | Great query capability built in for SimpleDB and DynamoDB. Leverages Server Explorer for RDS. |
| Messaging components | | Send messages to queues, and send messages to topics. Cannot delete queue messages, or tap into subscriptions. |

Setting up the Visual Studio Plugin for AWS

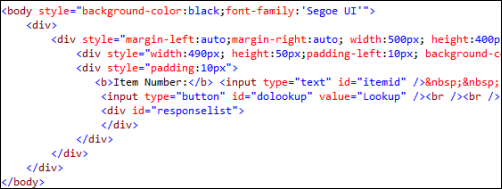

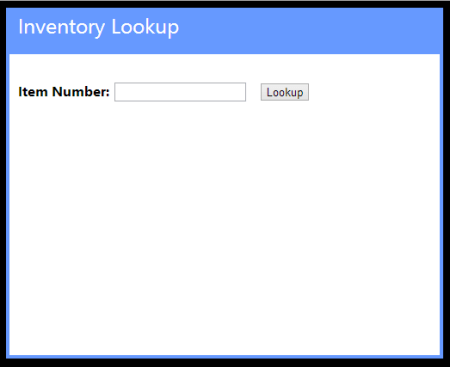

Getting a full AWS experience from Visual Studio is easy. Amazon has bundled a few of the components together, so when you install the AWS Toolkit for Visual Studio, you also get the AWS SDK for .NET. The Toolkit works with both Visual Studio 2010 and Visual Studio 2012. In the screenshot, notice that you also get access to a set of PowerShell commands for AWS.

Once the Toolkit is installed, you can view the full-featured plugin in Visual Studio and get deep access to just about every service that AWS has to offer. There’s no mention of the Simple Workflow Service (SWF) and a couple of others, but almost any service that makes sense to expose to developers is here in the plugin.

To add your account details, simply click the “add” icon next to the “Account” drop-down and plug in your credentials. Unlike the cloud plugin for Windows Azure, which requires unique credentials for each major service, the AWS plugin uses a single set of credentials for all cloud services. This makes the plugin that much easier to use.

Browsing Cloud Resources

First up, let’s see how easy it is to browse through the various cloud resources that are sitting in the AWS cloud. It’s important to note that your browsing is specific to the chosen data center (a “region” in AWS terms). If you have US-East chosen as the active region, then don’t expect to see servers or databases deployed to other regions.

That’s not a huge deal, but something to keep in mind if you’re temporarily panicking about a “missing” server!
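One way to picture why the view is scoped this way: most AWS services are reached through a separate endpoint per region, so a client pointed at one region never asks the others. Here is a minimal Python sketch, assuming the common `service.region.amazonaws.com` hostname pattern (some services deviate from it) and using a helper name of my own that is not part of any AWS SDK:

```python
def regional_endpoint(service: str, region: str) -> str:
    """Build the conventional regional hostname for an AWS service.

    Assumption: the service follows the common
    "<service>.<region>.amazonaws.com" pattern; not every service does.
    """
    return f"{service}.{region}.amazonaws.com"


# A server launched in us-west-2 is served by a different endpoint than the
# one queried while US-East is the active region, so it looks "missing".
active = regional_endpoint("ec2", "us-east-1")
other = regional_endpoint("ec2", "us-west-2")
print(active)
print(other)
```

Switching the active region in the plugin is the equivalent of swapping `active` for `other` here.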

Virtual Machines

AWS is best known for its popular EC2 service, where anyone can provision virtual machines in the cloud. From the Visual Studio plugin, you can browse server templates called Amazon Machine Images (AMIs), server instances, security keys, firewall rules (called Security Groups), and persistent storage (called Volumes).

Unlike the Windows Azure plugin for Visual Studio that populates the plugin tree view with the records themselves, the AWS plugin assumes that you have a LOT of things deployed and opens a separate window for the actual user records. For instance, double-clicking the AMIs menu item launches a window that lets you browse the massive collection of server templates deployed by AWS or others.

The Instances node reveals all of the servers you have deployed within this data center. Notice that this view also pulls in any persistent disks that are used. Nice touch.

In addition to a dense set of properties that you can view about your server, you can also browse the VM itself by triggering a Remote Desktop connection!

Finally, you can also browse Security Groups and see which firewall ports are opened for a particular Group.
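To make the firewall idea concrete, here is a small Python model of how a Security Group's inbound rules decide whether traffic gets through. It is a sketch of the concept only, not the EC2 API: the `IngressRule` type, the `is_allowed` helper, and the sample addresses are all hypothetical.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class IngressRule:
    protocol: str   # "tcp" or "udp"
    from_port: int  # first port in the allowed range
    to_port: int    # last port in the allowed range (inclusive)
    cidr: str       # allowed source range, e.g. "0.0.0.0/0"


def is_allowed(rules, protocol, port, source_ip):
    """Return True if any rule admits this protocol/port/source combination."""
    addr = ipaddress.ip_address(source_ip)
    for rule in rules:
        if (rule.protocol == protocol
                and rule.from_port <= port <= rule.to_port
                and addr in ipaddress.ip_network(rule.cidr)):
            return True
    # Inbound traffic that no rule explicitly allows is denied.
    return False


web_group = [
    IngressRule("tcp", 80, 80, "0.0.0.0/0"),           # HTTP from anywhere
    IngressRule("tcp", 3389, 3389, "203.0.113.0/24"),  # RDP from one range only
]
http_ok = is_allowed(web_group, "tcp", 80, "198.51.100.7")
rdp_ok = is_allowed(web_group, "tcp", 3389, "198.51.100.7")
print(http_ok, rdp_ok)
```

With these sample rules, the HTTP request is admitted while the Remote Desktop attempt from outside the listed range is not.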

Overall, this plugin does an exceptional job showing the properties and settings for virtual machines in the AWS cloud.

Databases

AWS offers multiple database options. You’ve got SimpleDB which is a basic NoSQL database, DynamoDB for high performing NoSQL data, and RDS for managed relational databases. The AWS plugin for Visual Studio lets you browse each one of these.

For SimpleDB, the Visual Studio plugin shows all of the domain records in the tree itself.

Right-clicking a given domain and choosing Properties pulls up the number of records in the domain, and how many unique attributes (columns) there are.

Double-clicking on the domain name shows you the items (records) it contains.

Pretty good browsing story for SimpleDB, and about what you’d expect from a beta product that isn’t highly publicized by AWS themselves.

Amazon RDS is a very cool managed database, not entirely unlike Windows Azure SQL Database. In this case, RDS lets you deploy managed MySQL, Oracle, and Microsoft SQL Server databases. From the Visual Studio plugin, you can browse all your managed instances and see the database security groups (firewall policies) set up.

Much like EC2, Amazon RDS has some great property information available from within Visual Studio. While the Properties window is expectedly rich, you can also right-click the database instance and Add to Server Explorer (so that you can browse the database like any other SQL Server database). This is how you would actually see the data within a given RDS instance. Very thoughtful feature.

Amazon DynamoDB is great for high-performing applications, and the Visual Studio plugin for AWS lets you easily browse your tables.

If you right-click a given table, you can see various statistics pertaining to the hash key (critical for fast lookups) and the throughput that you’ve provisioned.

Finally, double-clicking a given table results in a view of all your records.

Good overall coverage of AWS databases from this plugin.

Storage

For storage, Amazon S3 is arguably the gold standard in the public cloud. With amazing redundancy, S3 offers a safe, easy way to store binary content offsite. From the Visual Studio plugin, I can easily browse my list of S3 buckets.

Bucket properties are extensive, and the plugin does a great job surfacing them. Right-clicking on a particular bucket and viewing Properties turns up a set of categories that describe bucket permissions, logging behavior, website settings (if you want to run an entire static website out of S3), access policies, and content expiration policies.

As you might expect, you can also browse the contents of the bucket itself. Here I can see not only my bucket item, but all the properties of it.

This plugin does a very nice job browsing the details and content of AWS S3 buckets.

Messaging

AWS offers a pair of messaging technologies for developers building solutions that share data across system boundaries. First, Amazon SNS is a service for push-based routing to one or more “subscribers” to a “topic.” Amazon SQS provides a durable queue for messages between systems. Both services are browsable from the AWS plugin for Visual Studio.

For a given SNS topic, you can view all of the subscriptions and their properties.

For SQS queues, you can not only see the queue properties, but also a sampling of messages currently in the queue.

Messaging isn’t the sexiest part of a solution, but it’s nice to see that AWS developers get a great view into the queues and topics that make up their systems.

Web Applications

When most people think of AWS, I bet they think of compute and storage. While the term “platform as a service” means less and less every day, AWS has gone out and built a pretty damn nice platform for hosting web applications. .NET developers have two choices: CloudFormation and Elastic Beanstalk. Both of these are now nicely supported in the Visual Studio plugin for AWS. CloudFormation lets you build up sets of AWS services into a template that can be deployed over and over again. From the Visual Studio plugin, you can see all of the web application stacks that you’ve deployed via CloudFormation.

Double-clicking on a particular entry pulls up all the settings, resources used, custom metadata attributes, event log, and much more.

Elastic Beanstalk is an even higher abstraction that makes it easy to deploy, scale, and load balance your web application. The Visual Studio plugin for AWS shows you all of your Elastic Beanstalk environments and applications.

The plugin shows you a ridiculous amount of detail for a given application.

For developers looking at viable hosting destinations for their web applications, AWS offers a pair of very nice choices. The Visual Studio plugin also gives a first-class view into these web application environments.

Identity Management

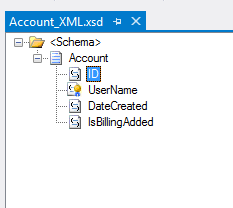

Finally, let’s look at how the plugin supports Identity Management. AWS has their own solution for this called Identity and Access Management (IAM). Developers use IAM to secure resources, and even access to the AWS Management Console itself. From within Visual Studio, developers can create users and groups and view permission policies.

For a group, you can easily see the policies that control what resources and fine-grained actions users of that group have access to.

Likewise, for a given user, you can see what groups they are in, and what user-specific policies have been applied to them.
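If you haven't seen one, a permission policy is just a JSON document listing which actions are allowed (or denied) on which resources. The Python sketch below builds an illustrative read-only policy for a single S3 bucket; the function name and the bucket name are hypothetical, and the policy is an example of the format rather than anything taken from my account.

```python
import json


def read_only_bucket_policy(bucket_name: str) -> dict:
    """Return an IAM-style policy allowing read-only access to one bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # ListBucket applies to the bucket, GetObject to its objects.
                "Action": ["s3:ListBucket", "s3:GetObject"],
                "Resource": [
                    f"arn:aws:s3:::{bucket_name}",
                    f"arn:aws:s3:::{bucket_name}/*",
                ],
            }
        ],
    }


# "example-bucket" is a placeholder name for illustration.
policy = read_only_bucket_policy("example-bucket")
print(json.dumps(policy, indent=2))
```

A document like this can be attached to a group (so every member inherits it) or to an individual user.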

The browsing story for IAM is very complete and makes it easy to include identity management considerations in cloud application design and development.

Deploying and Updating Cloud Resources

At this point, I’ve probably established that the AWS plugin for Visual Studio provides an extremely comprehensive browsing experience for the AWS cloud. Let’s look at a few changes you can make to cloud resources from within the confines of Visual Studio.

Virtual Machines

For EC2 virtual machines, you can pretty much do anything from Visual Studio that you could do from the AWS Management Console. This includes launching instances of servers, changing running instance metadata, terminating existing instances, adding/detaching storage volumes, and much more.

Heck, you can even modify firewall policies (security groups) used by EC2 servers.

Great story for actually interacting with EC2 instead of just working with a static view.

Databases

The database story is equally great. Whether it’s SimpleDB, DynamoDB, or RDS, you can easily create databases, add rows of data, and change database properties. For instance, when you choose to create a new managed database in RDS, you get a great wizard that steps you through the critical input needed.

You can even modify a running RDS instance and change everything from the server size to the database platform version.

Want to increase the throughput for a DynamoDB table? Just view the Properties and dial up the capacity values.
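As a rough guide for picking those numbers, the arithmetic below assumes the capacity-unit definitions in the current DynamoDB documentation: one read unit covers one strongly consistent read per second of an item up to 4 KB, and one write unit covers one write per second of an item up to 1 KB. Those definitions may have been different when this post was written, and the function names are my own.

```python
import math


def read_capacity_units(item_size_bytes, reads_per_second, strongly_consistent=True):
    """Estimate read units, assuming 4 KB per unit for a consistent read."""
    units_per_read = math.ceil(item_size_bytes / 4096)
    total = units_per_read * reads_per_second
    if not strongly_consistent:
        # Assumption: eventually consistent reads cost half as much.
        total = total / 2
    return math.ceil(total)


def write_capacity_units(item_size_bytes, writes_per_second):
    """Estimate write units, assuming 1 KB per unit."""
    return math.ceil(item_size_bytes / 1024) * writes_per_second


# Illustrative workload: 6 KB items read 100 times per second,
# and 2.5 KB items written 20 times per second.
consistent_reads = read_capacity_units(6144, 100)
eventual_reads = read_capacity_units(6144, 100, strongly_consistent=False)
writes = write_capacity_units(2560, 20)
print(consistent_reads, eventual_reads, writes)
```

Item sizes are rounded up to the next whole unit before multiplying, which is why slightly larger items can cost noticeably more capacity.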

The database management options in the AWS plugin for Visual Studio are comprehensive and give developers incredible power to provision and maintain cloud-scale databases from within the comfort of their IDE.

Storage

The Amazon S3 functionality in the Visual Studio plugin is great. Developers can use the plugin to create buckets, add content to buckets, delete content, set server-side encryption, create permission policies, set expiration policies, and much more.

It’s very useful to be able to fully interact with your object storage service while building cloud apps.

Messaging

Developers building applications that use messaging components have lots of power when using the AWS plugin for Visual Studio. From within the IDE, you can create SQS queues, add/edit/delete queue access policies, change timeout values, alter retention periods, and more.

Similarly for SNS users, the plugin supports creating Topics, adding and removing Subscriptions, and adding/editing/deleting Topic access policies.

Once again, most anything you can do from the AWS Management Console with messaging components, you can do in Visual Studio as well.

Web Applications

While the Visual Studio plugin doesn’t support creating new Elastic Beanstalk packages (although you can trigger the “create” wizard by right-clicking a project in the Visual Studio Solution Explorer), you still have a few changes that you can make to running applications. Developers can restart applications, rebuild environments, change EC2 security groups, modify load balancer settings, and set a whole host of parameter values for dependent services.

CloudFormation users can delete deployed stacks, or create entirely new ones. Use an AWS-provided CloudFormation template, or reference your own when walking through the “new stack” wizard.
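For a sense of what "your own" template looks like, here is about the smallest useful one I can think of, built as a Python dictionary and serialized to JSON. The logical name `ExampleBucket` and the description are placeholders; the stack it describes contains a single S3 bucket and reports the generated bucket name as an output.

```python
import json

template = {
    "AWSTemplateFormatVersion": "2010-09-09",
    "Description": "Illustrative stack containing one S3 bucket",
    "Resources": {
        # "ExampleBucket" is a hypothetical logical name for this sketch.
        "ExampleBucket": {"Type": "AWS::S3::Bucket"},
    },
    "Outputs": {
        # Ref on an S3 bucket resource resolves to the bucket's name.
        "BucketName": {"Value": {"Ref": "ExampleBucket"}},
    },
}

template_body = json.dumps(template, indent=2)
print(template_body)
```

Save the printed JSON to a file and you have something you can point the wizard at.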

I can imagine that it’s pretty useful to be able to deploy, modify, and tear down these cloud-scale apps all from within Visual Studio.

Identity Management

Finally, the IAM components of the Visual Studio plugin have a high degree of interactivity as well. You can create groups, define or change group policies, create/edit/delete users, add users to groups, create/delete user-specific access keys, and more.

Testing Cloud Resources

Here, we’ll look at a pair of areas where being able to test directly from Visual Studio is handy.

Databases

All the AWS databases can be queried directly from Visual Studio. SimpleDB users can issue simple query statements against the items in a domain.
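SimpleDB's query statements look like a stripped-down SQL `select`. The Python sketch below assembles one, assuming the quoting rules as I understand them from the SimpleDB documentation: backticks around domain and attribute names, single quotes around values, and a doubled quote character to escape either. The helper names, domain, and attribute are made up for illustration.

```python
def quote_name(name: str) -> str:
    """Quote a domain or attribute name with backticks, doubling any inside."""
    return "`" + name.replace("`", "``") + "`"


def quote_value(value: str) -> str:
    """Quote a string value with single quotes, doubling any inside."""
    return "'" + value.replace("'", "''") + "'"


def build_select(domain, attribute, value, limit=None):
    """Assemble a simple equality query against one attribute."""
    expr = (
        f"select * from {quote_name(domain)} "
        f"where {quote_name(attribute)} = {quote_value(value)}"
    )
    if limit is not None:
        expr += f" limit {int(limit)}"
    return expr


# Hypothetical domain and attribute names.
query = build_select("customers", "last_name", "O'Brien", limit=10)
print(query)
```

The resulting string is the kind of statement you can try out in the plugin's query window.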

For RDS, you cannot query directly from the AWS plugin, but when you choose the option to Add to Server Explorer, the plugin adds the database to the Visual Studio Server Explorer where you can dig deeper into the SQL Server instance. Finally, you can quickly scan through DynamoDB tables and match against any column that was added to the table.

Overall, developers who want to integrate with AWS databases from their Visual Studio projects have an easy way to test their database queries.

Messaging

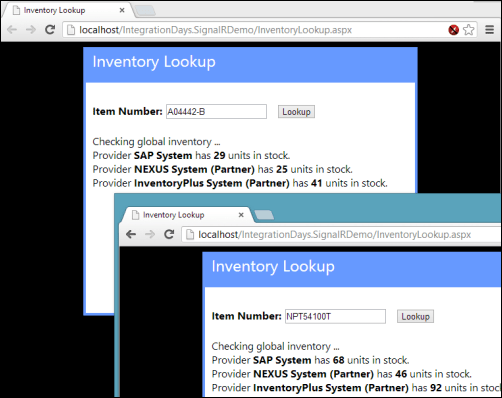

Testing messaging solutions can be a cumbersome activity. You often have to create an application to act as a publisher, and then create another to act as the subscriber. The AWS plugin for Visual Studio does a pretty nice job simplifying this process. For SQS, it’s easy to create a sample message (containing whatever text you want) and send it to a queue.

Then, you can poll that queue from Visual Studio and see the message show up! You can’t delete messages from the queue, although you CAN do that from the AWS Management Console website.
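Why is the message still there after you poll it? In SQS, receiving and deleting are separate steps: a received message is only hidden for a "visibility timeout", and it comes back unless a consumer deletes it using the receipt handle it was given. The toy in-memory model below sketches that lifecycle in Python. It is not the SQS API; the class, its methods, and the explicit `now` clock are all hypothetical simplifications.

```python
import itertools


class ToyQueue:
    """In-memory stand-in that mimics receive-then-delete semantics."""

    def __init__(self, visibility_timeout=30):
        self.visibility_timeout = visibility_timeout
        self._messages = {}  # message id -> [body, time it becomes visible]
        self._receipts = {}  # receipt handle -> message id
        self._ids = itertools.count(1)

    def send(self, body):
        msg_id = next(self._ids)
        self._messages[msg_id] = [body, 0]  # visible immediately
        return msg_id

    def receive(self, now):
        """Return (body, receipt_handle) for one visible message, or None."""
        for msg_id, entry in self._messages.items():
            if entry[1] <= now:
                # Hide the message from other consumers for a while.
                entry[1] = now + self.visibility_timeout
                handle = f"receipt-{msg_id}-{now}"
                self._receipts[handle] = msg_id
                return entry[0], handle
        return None

    def delete(self, handle):
        """Remove the message permanently using its receipt handle."""
        msg_id = self._receipts.pop(handle)
        self._messages.pop(msg_id, None)


queue = ToyQueue(visibility_timeout=30)
queue.send("hello")
first = queue.receive(now=0)    # message is handed out and hidden
hidden = queue.receive(now=10)  # still inside the visibility timeout
again = queue.receive(now=31)   # never deleted, so it reappears
queue.delete(again[1])
gone = queue.receive(now=100)   # deleted for good
print(first[0], hidden, again[0], gone)
```

Polling from the plugin corresponds to the `receive` calls here; the missing piece is the `delete` step.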

As for SNS, the plugin makes it very easy to publish a new message to any Topic.

This will send a message to any Subscriber attached to the Topic. However, there’s no simulator here, so you’d actually have to set up a legitimate Subscriber and then go check that Subscriber for the test message you sent to the Topic. Not a huge deal, but something to be aware of.

Summary

Boy, that was a long post. However, I thought it would be helpful to get a deep dive into how AWS surfaces its services to Visual Studio developers. Needless to say, they do a spectacular job. Not only do they provide deep coverage for nearly every AWS service, but they also included countless little touches (e.g. clickable hyperlinks, right-click menus everywhere) that make this plugin a joy to use. If you’re a .NET developer who is looking for a first-class experience for building, deploying, and testing cloud-scale applications, you could do a lot worse than AWS.