I enjoyed 2014 and spent much of the year learning new things and building up an exceptional Product team at CenturyLink. Additionally, I spoke at events in Seattle, London, Houston, Ghent, Utrecht, and Oslo, delivered Pluralsight courses about Personal Productivity and DevOps, and tech-reviewed a book about SignalR. Below are some of the writing projects I enjoyed most this year, and a list of the best books I read.

Favorite Blogs and Articles

After seven years, I still look forward to adding pieces to this blog, in addition to writing for InfoQ, my company’s blog, and a few other available outlets.

- [My Blog] 8 Characteristics of our DevOps Organization. This was by far the most popular blog post that I wrote this year. Apparently it struck a chord.

- [Forbes] Get Ready For Hybrid Cloud. This Forbes piece kicked off a series of posts about Hybrid Cloud and practical advice for those planning these deployments.

- [CTL Blog] Recognizing the Challenges of Hybrid Cloud: Part I, Part II, Part III, Part IV. In this four-part series, I looked at all sorts of hybrid cloud challenges (e.g. security, data integration, system management, skillset) and proven solutions for each.

- [InfoQ] I ran a program about cloud management at scale, and really enjoyed conducting this interview with three very smart folks.

- [My Blog] Richard’s Top 10 Rules for Meeting Organizers. The free-wheeling startup days are over for me, but I’ve kept that mindset even as I work for a much larger company now. Meetings are a big part of most company cultures, but your APPROACH to them is what determines whether meetings are soul-sucking endeavors, or super-useful exercises.

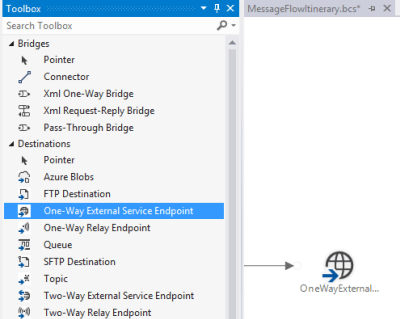

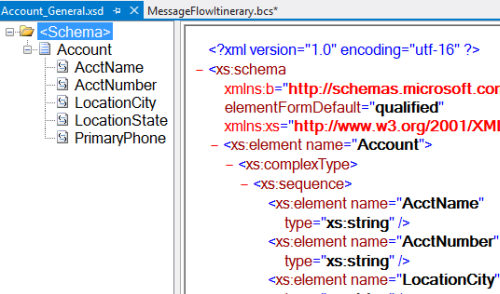

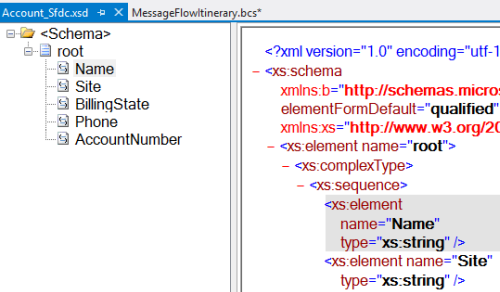

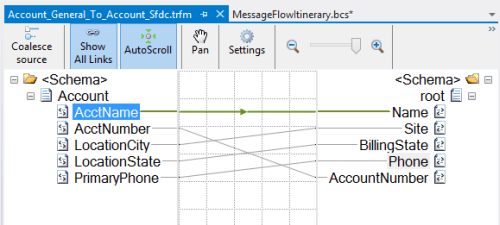

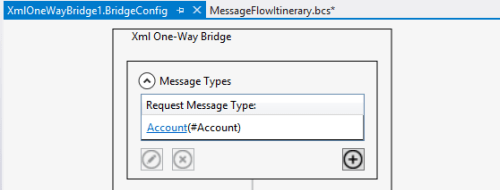

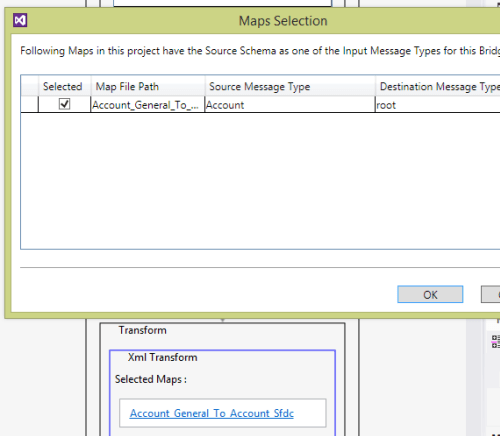

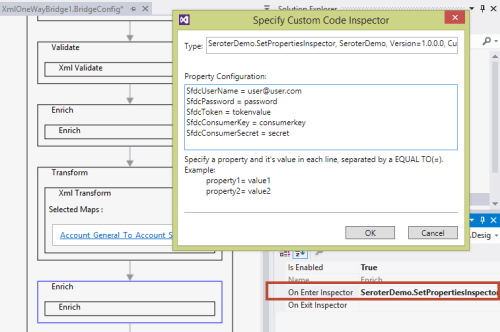

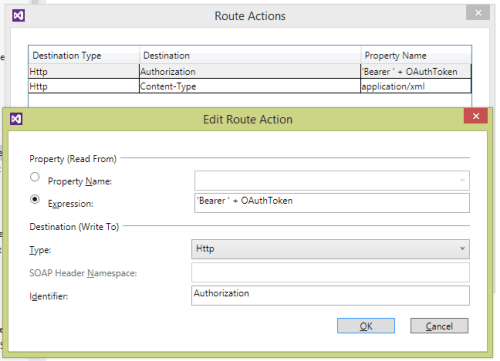

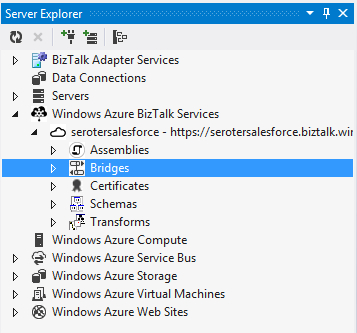

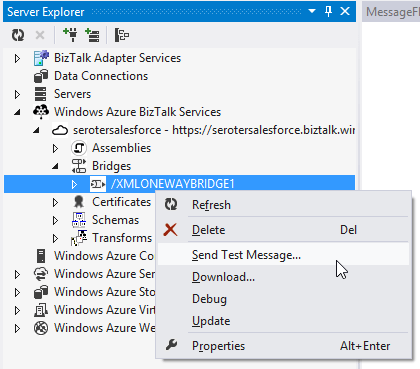

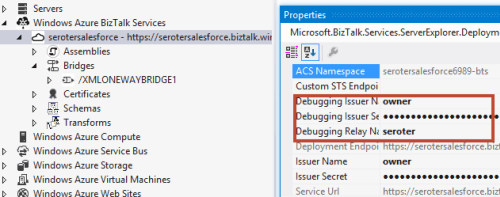

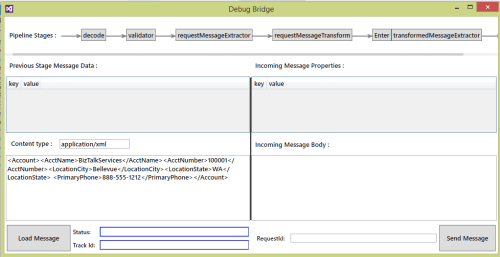

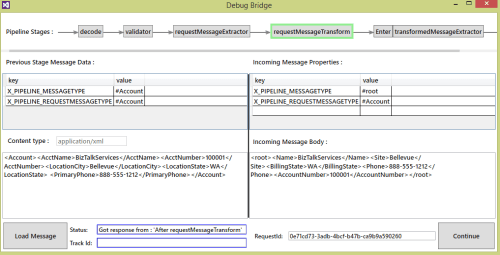

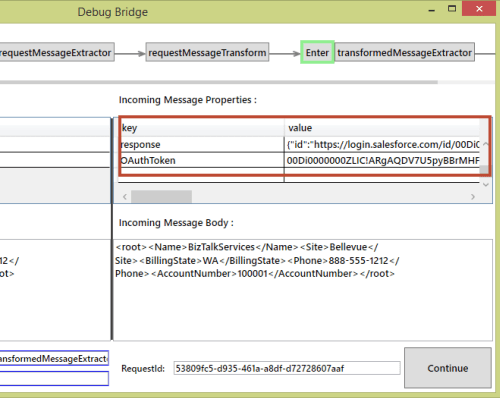

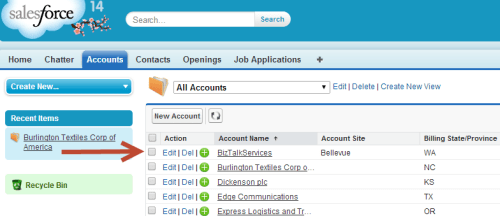

- [My Blog] Integrating Microsoft Azure BizTalk Services with Salesforce.com. I don’t know the real future of BizTalk Services, but that didn’t prevent me from taking it for a spin. Here, I show how to call a Salesforce.com endpoint from a BizTalk Services process.

- [My Blog] Using SnapLogic to Link (Cloud) Apps. Cloud-based integration continues to gain attention, and SnapLogic is doing some pretty cool stuff. I played around with their toolset a bit, and wrote up this summary.

- [My Blog] Call your CRM Platform! Using an ASP.NET Web API to Link Twilio and Salesforce.com. Twilio is pretty awesome, so I take any chance I can to bake it into a demo. For a Salesforce-sponsored webinar, I built up a demo that injected voice interactions into a business flow.

- [My Blog] DevOps, Cloud, and the Lean “Wheel of Waste.” I’ve admittedly spent more time this year thinking about organizational structures and business success, so this post (and others) reflects that a bit. Here, I look at all the various “wastes” that exist in a process, and how cloud computing can help address them.

- [My Blog] Comparing Cloud Provisioning, Scaling, Management. I love hands-on demos. In these three posts, I created resources in five leading clouds and tried out all sorts of things.

- [My Blog] Data Stream Processing with Amazon Kinesis and .NET Applications. There are so many cool stream-processing technologies out today! Microsoft shipped Event Hubs over the summer, and AWS launched Kinesis a half-year before that. In this post, I took a look at Kinesis and got a sample app up and running.

- [CTL Blog] Our First 140 Days as CenturyLink Cloud. This was my opportunity to reflect on our company progress six months after being acquired by CenturyLink.

Favorite Books

Of the couple dozen books that I read this year, these stood out the most.

- Competing Against Time: How Time-Based Competition is Shaping Global Markets. This book may be twenty-five years old, but it’s still pretty awesome. The author’s thesis is that “providing the most value for the lowest cost in the least amount of time is the new pattern for corporate success.” The book focuses on how time is a competitive advantage that improves productivity and responsiveness. Feels as true today as it was in 1990.

- How to Fail at Almost Everything and Still Win Big. Love him or hate him (I happen to love him), Dilbert-creator Scott Adams is someone who challenges convention. In this enjoyable read, Adams delightfully recounts his many failures and his idea that “one should have a system instead of a goal.” Lots of interesting advice that will make you think, even if you disagree.

- Start With Why: How Great Leaders Inspire Everyone to Take Action. The author states that “people don’t buy WHAT you do, they buy WHY you do it.” He then proceeds to explain how companies fail to motivate staff and engage customers by focusing on the wrong things. The best leaders, in Sinek’s view, have a clear sense of “why,” and motivate customers and employees alike. Good read and useful insight for existing and aspiring leaders.

- The Practice of Cloud System Administration: Designing and Operating Large Distributed Systems, Volume 2. If you are doing ANYTHING related to distributed systems today, you really need to read this book. I reviewed it for InfoQ (and interviewed the authors), and found it to be an extremely easy-to-follow, practical book that covers a wide range of best practices for building and maintaining complex systems.

- Creativity, Inc.: Overcoming the Unseen Forces that Stand in the Way of True Inspiration. Probably the best “DevOps” book that I read all year, even though it has nothing to do with technology. This story from the founder of Pixar is fantastic. His thesis is that “there are many blocks to creativity, but there are active steps we can take to protect the creative process.” There’s some great insight into Pixar’s founding, and some excellent lessons about cross-functional teams, eliminating waste, and empowering employees.

- The Art of War. A classic strategy book that I hadn’t read before. It’s a straightforward – but thoughtful – guide to military strategy that relates directly to modern business strategy.

- Service Innovation: How to Go from Customer Needs to Breakthrough Services. What does it actually mean to bring innovation to a service? This book looks at customer-centric ways to identify unmet needs and deliver outcomes that the customer would consider a success. Very helpful perspective for those delivering any type of service to an (internal or external) customer base.

- The Assist: Hoops, Hope, and the Game of Their Lives. Phenomenal story about a basketball coach who was all-consumed with ensuring that his underprivileged student-athletes found success on and off the court.

- The Box: How Shipping Containers Made the World Smaller and the World Economy Bigger. I can’t tell you how many times my wife asked me “why are you reading a book about shipping containers?” I loved this book. It looks at how a “simple” innovation like a shipping container fundamentally changed the world’s economy. It’s a well-written historical account with lessons on disruption that apply today.

- Mark Twain: A Life. This was a long, detailed book for someone with a long, adventurous life. A fascinating account of this period, the book recounts Twain’s colorful rise to global renown as a leading literary voice of America.

- The Goal: A Process of Ongoing Improvement. The excellent DevOps book “The Phoenix Project” owes a lot to this book. The Goal tells a fictitious tale of a manufacturing plant manager who faces higher expenses and long cycle times. The hero discovers new ways to optimize an entire system by digging into bottlenecks, capacity management, batch sizing, quality, and much more. It’s a fun read with tons of lessons for software practitioners who face similar challenges, and can apply similar solutions.

- Scaling Up Excellence: Getting to More Without Settling for Less. Spreading a culture of excellence to more people in more places requires hard work, relentless focus, a shared mindset, and amazing discipline. This book is full of case studies and guidance for successful (and unsuccessful!) scaling.

- Jim Henson: The Biography. An outstanding, meticulously detailed biography of one of the most talented and creative individuals of the last century. Great insight into team-building, quiet leadership, and taking risks.

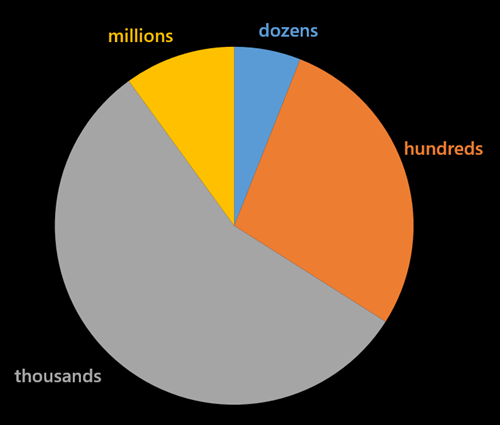

A heartfelt thanks to the 125,000+ visitors (and 207,000+ page views!) this year to the blog. It’s always a pleasure to meet many of you in real life, or in other virtual forums like Twitter. Here’s to the year ahead, and more opportunities to be inspired by technology and the people around us.

Many cloud providers focus on the “acquire stuff” experience and leave the “manage stuff” experience lacking. Whether your cloud resources live for three days or three years, there are maintenance activities. CenturyLink Cloud lets you create account hierarchies to represent your org, organize virtual servers into “groups”, act on those servers as a group, see cross-DC server health at a glance, and more. It’s a focus of this platform, and it differs from most other clouds that give you a flat list of cloud servers per data center and a limited number of UI-driven management tools. With the rise of configuration management as a mainstream toolset, platforms with limited UIs can still offer robust means for managing servers at scale. But CenturyLink Cloud is focused on everything from account management and price transparency to bulk server management in the platform.